This paper is a starting point for an entire business model ecosystem designed to distribute latency- and loss-sensitive Internet objects. For a set of premium content producers, there is a willingness to pay a little more for a guaranteed high-quality end-user experience. For some Internet Service Providers, there is a willingness to expend resources if there is a corresponding increase in revenue. What is needed is an ecosystem that supports both of these goals.

An overlay network called a "Media Grid" is introduced in this paper. A revenue allocation model is introduced along with two scenarios: delivery before caching, and delivery when the object is served entirely out of the last-mile cache. These scenarios are discussed with the goal of aligning the interests of everyone participating in the cooperatively managed video delivery ecosystem.

This work was funded in part by nuMetra and the InterStream Association in 2009.

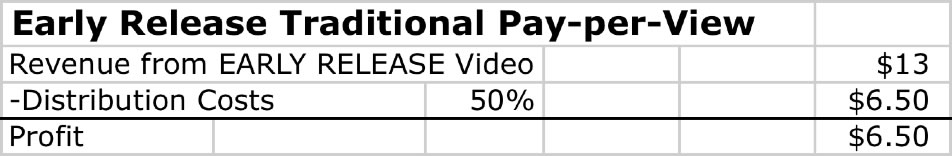

Paramount offers an early-release Pay-Per-View Movie Premiere. Let's assume that Paramount advertises an opening-night pay-per-view movie for $13 on the cable system or on a subscriber's Internet service, and the end-user clicks on the movie to purchase it. (The numbers used in this paper are solely for illustration and do not represent actual negotiated rates.)

With the traditional pay-per-view cable revenue split model, Paramount would typically receive 50% of the revenue from the movie, about $6.50 per video, and the rest would go to the distribution chain (the cable company).

(Editor's note: Here is The Cooperatively Managed CDN Spreadsheet.)

In this model, the cable company essentially resells the studio content, so the studio doesn't have a direct relationship with the customer. The studios gave a couple of reasons for wanting to change this:

1) They wanted a bigger piece of the pie, and

2) They wanted to market related products and services to their customers (screensavers, video games, bonus features, sequels, fan sites, subscriptions, etc.)

Some studios are seeking a more direct end user relationship, using an Internet portal or a proxy (Hulu, for example) to get their content to households. Let's explore the revenue flow in this best-effort Internet distribution model.

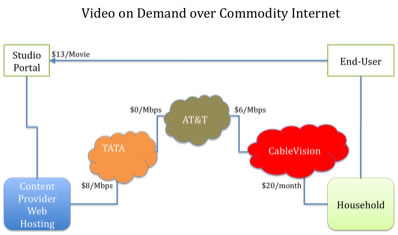

Over the best-effort commodity Internet, the studio pays an Internet Service Provider (ISP) and/or a CDN for Internet access on a metered basis. The upstream ISP or CDN in turn pays its own upstream providers along the distribution chain for best-effort service as needed. The revenue flow might look something like this:

After the end-user clicks to buy this $13 (4GB) movie, the packetized movie goes from the Paramount Internet channel web site to its upstream ISP (Tata) at a metered rate of $8/Mbps. Tata in turn sends that traffic through its peer (AT&T) for free, and AT&T transports those bits to Cablevision (charging Cablevision $6/Mbps), which in turn charges its customer a flat rate of $20/month. [In the diagram, the revenue figure is shown closest to the party receiving the revenue.]

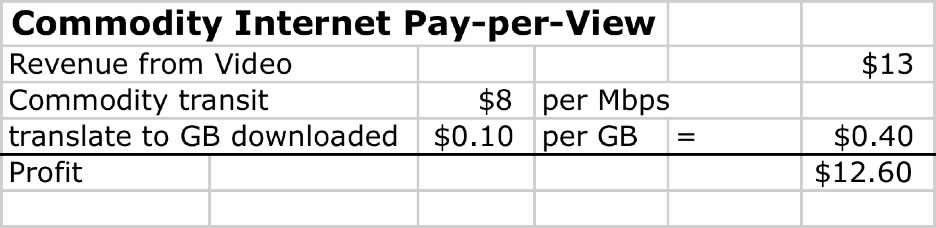

Let's assume that the movie is 4GB in size, and that at $8 per Mbps delivery costs $0.10 per GB, or $0.40 per movie. Under these assumptions, distributing this movie over the commodity Internet provides Paramount with profits of $13.00 - $0.40 = $12.60 per movie, compared with $6.50 per movie of revenue under the traditional revenue sharing model.
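The arithmetic behind this comparison can be checked in a few lines. This is a minimal sketch using the paper's illustrative figures; it takes the $0.10 per GB as given rather than deriving it from the $8/Mbps transit rate (together, the two figures imply about 80 GB delivered per billed Mbps per month). Money is held in `Decimal` to avoid floating-point rounding; the constant and function names are illustrative.

```python
from decimal import Decimal

# Illustrative figures from the paper; not actual negotiated rates.
RETAIL_PRICE = Decimal("13.00")        # pay-per-view price per movie
MOVIE_SIZE_GB = Decimal("4")           # movie size in GB
TRANSIT_COST_PER_GB = Decimal("0.10")  # per-GB cost the paper assumes at $8/Mbps
STUDIO_SHARE = Decimal("0.50")         # studio's share under the cable split
CENT = Decimal("0.01")


def cable_revenue(price: Decimal) -> Decimal:
    """Studio revenue per movie under the traditional cable revenue split."""
    return (price * STUDIO_SHARE).quantize(CENT)


def delivery_cost(size_gb: Decimal, cost_per_gb: Decimal) -> Decimal:
    """Metered transit cost of delivering one copy of the movie."""
    return (size_gb * cost_per_gb).quantize(CENT)


def best_effort_profit(price: Decimal, size_gb: Decimal, cost_per_gb: Decimal) -> Decimal:
    """Studio profit per movie when selling direct over best-effort transit."""
    return price - delivery_cost(size_gb, cost_per_gb)


print(f"delivery cost per movie: ${delivery_cost(MOVIE_SIZE_GB, TRANSIT_COST_PER_GB)}")
print(f"cable split revenue:     ${cable_revenue(RETAIL_PRICE)}")
print(f"best-effort profit:      ${best_effort_profit(RETAIL_PRICE, MOVIE_SIZE_GB, TRANSIT_COST_PER_GB)}")
```

The three lines print $0.40, $6.50 and $12.60, matching the figures above.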

So there is a larger profit margin here. This is certainly an interesting proposition, but in reality it is fraught with challenges.

First, the best-effort Internet service may be good enough for highly compressed standard definition video distributed under no-loss conditions, but for large, long-lived streams under conditions of network stress, the end-user experience can be perceived as quite poor. From the content provider perspective, anything but a perfect end-user experience is unacceptable, as it tends to result in increased credit card chargebacks, loss of goodwill and loss of revenue.

Second, with free peering relationships specifically, and with the global Internet in general, there is often little incentive to upgrade infrastructure when the upgrade does not directly lead to incremental revenue. This lack of alignment is one reason why we see congested peering links and so many inter-provider peering disputes.

Finally, best-effort transit service does not scale well for content with unpredictable demand characteristics. Content Distribution Networks scale much better but can only serve up content when their path is selected. We have heard stories of ISPs preferring a more circuitous traffic path to maintain their traffic ratios with their peers. As a result, the shortest path from the content to a CDN is sometimes not used, to the frustration of content providers and eyeballs alike. To solve this problem, one needs to actually embed the content within the last mile.

To fully realize the incremental revenue from direct over-the-Internet video sales, and to avoid these issues, producers of premium content recognize that it may make sense to pay a little more money for a better end-user experience.

In this final scenario, Paramount is willing to pay Tata a little extra for a high-quality "Media Grid" service, a kind of "diamond lane" for the Internet.

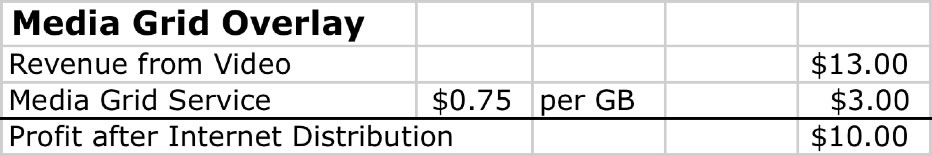

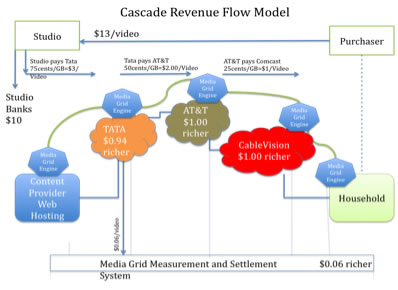

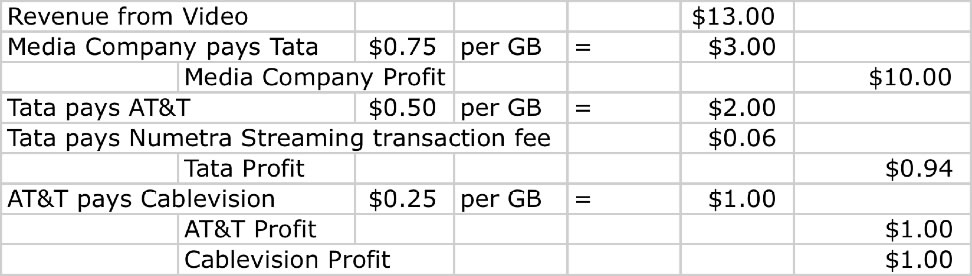

Let's talk about how this might work. Let's assume that Tata offers Media Grid Service to Paramount for a fee of 75 cents per GB, or $3 in total to deliver the 4GB movie over the Media Grid, as shown in the diagram.

From the Paramount perspective, this is still more profitable than the traditional cable company revenue share model: $13.00 - $3.00 = $10.00 per movie, versus $6.50.

Let's take a look into the Media Grid Overlay network to see how the packets and revenue flow.

Media Grid. The "Media Grid" is a global ecosystem consisting of network devices embedded within the networks between content provider and end-user. Each of these embedded network devices is called a "Media Grid Engine", and the engines are dynamically connected to one another across the underlying networks. Think of the Media Grid overlay as a cooperatively managed Content Distribution Network, with guaranteed, measured and audited service characteristics, and with each participant having a financial stake in the successful delivery of the premium content.

Traffic Flow. In the Media Grid model, the traffic is delivered from Paramount to the first Media Grid Engine (the origin server) in the path, and from there across the Media Grid Engines along the chain of intermediary ISPs. When the traffic reaches the last Media Grid Engine, the content is buffered (and potentially cached) for delivery to the eyeballs that ordered the movie.

Revenue Flow. The revenue (for successfully delivered content) is distributed across the operators of the Media Grid Engines in the path. How is the revenue allocated across the participating members of the ecosystem? There are many possible solutions for the community to discuss; here is one.

In the scenario, Tata charges its customer $0.75 per GB, or $3 for the Media Grid distribution of its premium movie. Tata has in turn negotiated Media Grid Services from its peer (AT&T) for 50 cents per GB, or $2.00 per movie. AT&T has negotiated its Media Grid Service with Cablevision in this example for 25 cents per GB, so AT&T pays Cablevision $1.00 per movie. And finally, the video is delivered to the end-user.

The Media Grid Measurement and Settlement System operator (shown on the bottom of the diagram) receives $0.06 per video stream from Tata in return for monitoring the Media Grid Engines and generating the settlement statements on behalf of the participants in the ecosystem.
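Following the money for a single movie makes the incentive alignment concrete. The sketch below records each payment in this scenario as a (payer, payee, amount) tuple and nets the payments out per party. The ledger representation is an illustrative assumption; the amounts are the paper's illustrative figures.

```python
from collections import defaultdict
from decimal import Decimal

# Payments for one movie in the Media Grid scenario (illustrative figures).
# Each entry is (payer, payee, amount in dollars).
PAYMENTS = [
    ("Paramount", "Tata", Decimal("3.00")),            # $0.75/GB x 4 GB
    ("Tata", "AT&T", Decimal("2.00")),                 # $0.50/GB x 4 GB
    ("AT&T", "Cablevision", Decimal("1.00")),          # $0.25/GB x 4 GB
    ("Tata", "Settlement operator", Decimal("0.06")),  # per-stream settlement fee
]


def net_positions(payments):
    """Return each party's net position (received minus paid); negative means net payer."""
    net = defaultdict(Decimal)
    for payer, payee, amount in payments:
        net[payer] -= amount
        net[payee] += amount
    return dict(net)


for party, amount in net_positions(PAYMENTS).items():
    print(f"{party:20} {amount}")
```

Each party's net position is what it keeps: Tata $0.94, AT&T $1.00, Cablevision $1.00 and the settlement operator $0.06, which together account for the $3.00 that Paramount pays.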

Implications. We accomplish the goal of allocating revenue to where the infrastructure is most heavily used. Tata gets a chunk of the revenue for sourcing the deal to the Media Grid, and AT&T and Cablevision get incremental revenue for delivering the content over their diamond lanes. The interests and incentives here are aligned: Tata, AT&T and Cablevision all receive incremental revenue, but only upon the successful delivery of the content. If there are problems along the path, all are incented to fix them in order to gain the incremental revenue.

Optimization. In a live streaming scenario, all participants share in the cost of delivery, but in a static movie download scenario, the content can be cached. When the content is cached in the Cablevision Media Grid Engine, for example, the load on Tata and AT&T is potentially zero. Should AT&T and Tata get their chunk of the revenue if they don't do anything toward the successful delivery of the movie?

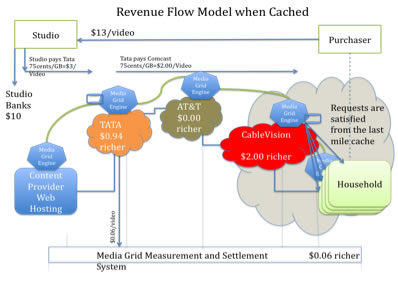

Let's explore a straw man revenue model for dealing with cached content delivery.

In this scenario, revenue is proportional to the cache hits; everyone along the path is compensated for the first distribution, and after that, only when their caches are hit. This incents big caches at the edge for popular content, and the widest dissemination of caches to increase cache hits and corresponding revenue.
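One way to make "revenue proportional to the cache hits" concrete is sketched below. It is a hypothetical rule, not one the paper specifies: the origin operator keeps a fixed fee on every sale (the `ORIGIN_FEE` value is invented), and the remainder of each delivery's price is split equally among the operators whose Media Grid Engines actually carried that delivery's bytes. The first copy crosses the whole path, so every hop is paid once; later copies served from the last-mile cache pay only the origin and the cache operator.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical parameters; the paper leaves the actual split open.
PRICE_PER_DELIVERY = Decimal("3.00")  # collected by the origin operator per movie
ORIGIN_FEE = Decimal("0.60")          # invented fixed fee kept by the origin
ORIGIN = "Tata"


def allocate(deliveries):
    """Allocate revenue per delivery under an assumed cache-hit rule.

    Each element of `deliveries` lists the operators whose Media Grid
    Engines carried bytes for that delivery. The origin always earns
    ORIGIN_FEE; the rest of the price is split equally among the carriers.
    A real rule would also need a rounding policy for uneven splits.
    """
    revenue = defaultdict(Decimal)
    for carriers in deliveries:
        revenue[ORIGIN] += ORIGIN_FEE
        share = (PRICE_PER_DELIVERY - ORIGIN_FEE) / len(carriers)
        for operator in carriers:
            revenue[operator] += share
    return dict(revenue)


# The first copy crosses the whole path; the next three are last-mile cache hits.
log = [["Tata", "AT&T", "Cablevision"]] + [["Cablevision"]] * 3
for operator, amount in allocate(log).items():
    print(f"{operator}: ${amount}")
```

With one full-path delivery followed by three cache hits, Tata earns $3.20, AT&T $0.80 (the first distribution only) and Cablevision $8.00, which adds up to the $12.00 collected for four movies.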

Let's walk through the example to demonstrate this.

Traffic Flow. Assume that Paramount has already uploaded the video to the Tata Media Grid Engine (origin server). Assume further that the first copy of the video has propagated to the last mile Media Grid Engine (cache).

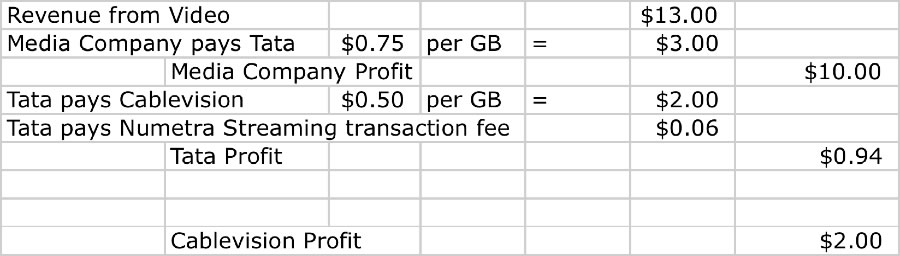

Revenue Flow. In this model, the origin Media Grid Engine operator (Tata) charges Paramount for each movie, and the last mile operator is paid the remainder of the $3.00 for serving the content to the eyeballs on behalf of Tata. Since AT&T has no cache hit in this scenario, it doesn't receive any revenue, and it incurs no incremental bandwidth cost.

Implication. There is no load on Tata, so why does Tata get a chunk of revenue? Two reasons. First, Tata operates the origin server (the server of last resort), and second, Tata originated the customer content onto the Media Grid. Tata again pays the Streaming Settlement fee of $0.06.

The last mile operator gets the largest chunk of revenue for serving the content out of its cache, which gives it the strongest incentive to improve last-mile performance in order to earn its share of the associated revenue.

Discussion Needed. We need to discuss the mechanism by which the market determines the allocation of revenue between the origin and the last mile cache. One system would have Tata get the same amount for every successful movie, with the last mile getting everything left. Another would have the last mile guys get the same amount no matter what, with the originator of the sale getting everything else. The goal needs to be an open market solution where the dollars flow to the bottlenecks.
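The two candidate systems can be compared side by side. In this minimal sketch (the function names and the $1.00 fixed amounts are hypothetical), the difference shows up when the per-movie price changes: under a fixed origin fee, any price change flows entirely to the last mile, while under a fixed last-mile fee it flows entirely to the originator of the sale.

```python
from decimal import Decimal


def fixed_origin_fee(price: Decimal, origin_fee: Decimal) -> dict:
    """The origin operator gets a fixed amount; the last mile gets the rest."""
    if origin_fee > price:
        raise ValueError("fixed fee exceeds the price")
    return {"origin": origin_fee, "last_mile": price - origin_fee}


def fixed_last_mile_fee(price: Decimal, last_mile_fee: Decimal) -> dict:
    """The last mile gets a fixed amount; the originator of the sale gets the rest."""
    if last_mile_fee > price:
        raise ValueError("fixed fee exceeds the price")
    return {"origin": price - last_mile_fee, "last_mile": last_mile_fee}


# Hypothetical: the same $1.00 fixed amount under each policy, at two prices.
for price in (Decimal("3.00"), Decimal("4.00")):
    print(f"price ${price}")
    print("  fixed origin fee:   ", fixed_origin_fee(price, Decimal("1.00")))
    print("  fixed last-mile fee:", fixed_last_mile_fee(price, Decimal("1.00")))
```

Raising the price from $3.00 to $4.00 adds the extra dollar to the last mile under the first policy and to the originator under the second, which is the kind of trade-off an open market mechanism would have to settle.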

There are several mechanisms to provide guaranteed high quality service to the customer base.

First, since popular content will most likely be cached in Media Grid Engines located within the last mile, the content has fewer router hops to travel, and so there are fewer places where congestion may occur.

Second, we expect the last mile providers will be motivated to upgrade their infrastructure (or are already upgrading their infrastructure) in order to realize the incremental revenue described here.

Third, there is a real incentive to prioritize these high-priority bits, which generate incremental revenue, over the bits sold today as part of best-effort Internet service. We expect queue prioritization and similar solutions to be employed in hardware to maximize the likelihood of realizing the incremental revenue.
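The paper does not say which scheduling discipline the hardware would use. Strict priority queuing is the simplest example and is sketched below as an assumption: two queues, with Media Grid packets always transmitted before best-effort packets. Strict priority can starve best-effort traffic under sustained load, which is why deployed schedulers typically cap or weight the priority class. The class and method names here are hypothetical.

```python
from collections import deque


class StrictPriorityScheduler:
    """Two-class strict-priority queue: Media Grid packets always go first."""

    def __init__(self):
        self.media_grid = deque()
        self.best_effort = deque()

    def enqueue(self, packet, media_grid: bool) -> None:
        # Classify the packet into the premium or the best-effort queue.
        (self.media_grid if media_grid else self.best_effort).append(packet)

    def dequeue(self):
        """Return the next packet to transmit, or None if both queues are empty."""
        if self.media_grid:
            return self.media_grid.popleft()
        if self.best_effort:
            return self.best_effort.popleft()
        return None


sched = StrictPriorityScheduler()
sched.enqueue("web-1", media_grid=False)
sched.enqueue("movie-1", media_grid=True)
sched.enqueue("web-2", media_grid=False)
sched.enqueue("movie-2", media_grid=True)

order = []
while (pkt := sched.dequeue()) is not None:
    order.append(pkt)
print(order)  # ['movie-1', 'movie-2', 'web-1', 'web-2']
```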

Finally, there are mechanisms within the Media Grid Engines to strategically time the injection of traffic so that it avoids congestion encountered along the path.
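The paper does not describe how the Media Grid Engines choose when to inject traffic. As a purely hypothetical illustration, an engine with a per-hour utilization forecast for a bottleneck link could pick the start hour for a prefetch whose window has the lowest peak utilization. The function name, the forecast values and the selection rule are all assumptions.

```python
def best_start_hour(utilization, duration_hours):
    """Return the start hour whose transfer window has the lowest peak utilization.

    `utilization` holds forecast link utilization (0.0 to 1.0) for each hour;
    windows wrap around past the end of the list. Ties go to the earliest hour.
    """
    n = len(utilization)
    if not 0 < duration_hours <= n:
        raise ValueError("duration must be between 1 and the number of hours")

    def window_peak(start):
        # Highest forecast utilization the transfer would encounter.
        return max(utilization[(start + i) % n] for i in range(duration_hours))

    # min() returns the first hour among equally good candidates.
    return min(range(n), key=window_peak)


# Hypothetical 24-hour forecast with an evening peak.
forecast = [0.30, 0.25, 0.20, 0.15, 0.15, 0.20, 0.30, 0.40,
            0.50, 0.55, 0.55, 0.60, 0.60, 0.60, 0.60, 0.65,
            0.70, 0.80, 0.90, 0.95, 0.95, 0.85, 0.60, 0.40]
print(best_start_hour(forecast, duration_hours=3))
```

For a three-hour transfer the sketch picks hour 2, whose window peaks at 20% utilization, and steers clear of the evening peak.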

Do these mechanisms for prioritizing traffic and missing the bottlenecks create a clash with Net Neutrality advocates? Not really.

There are several definitions of Net Neutrality. We will use the definition that Net Neutrality means that any prioritized service should be priced and made available in an open, transparent, and non-discriminatory way. By working with the ecosystem participants, the Media Grid association defines, and stands behind, the prioritization system. This is a much stronger and more defensible Net Neutrality stance.

The machinery to make this work is behind the scenes: a kind of Visa-style measurement, auditing, settlement, and archival system for Internet streams across the Media Grid.

Inside each Media Grid Engine are meters that constantly monitor and report the performance of the streams going through it. The results are fed back to a settlement system that issues periodic settlement reports to all intermediary ISPs. In this scenario, Tata would purchase streaming media credits from nuMetra for a price of, say, 6 cents per stream in return for nuMetra handling these settlement functions.
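The meter-to-statement flow can be sketched as a small batch job. In this hypothetical version, each meter report says whether a stream was delivered successfully; hop charges accrue only for successful deliveries, matching the rule that participants are paid only upon successful delivery, while the 6-cent settlement fee is assumed to apply to every metered stream. The data shapes and the `settle` function are illustrative assumptions, not nuMetra's actual system.

```python
from collections import defaultdict
from decimal import Decimal

# Per-movie charges along the path (the paper's illustrative figures).
HOP_CHARGES = [
    ("Paramount", "Tata", Decimal("3.00")),
    ("Tata", "AT&T", Decimal("2.00")),
    ("AT&T", "Cablevision", Decimal("1.00")),
]
SETTLEMENT_FEE = Decimal("0.06")  # assumed to apply to every metered stream


def settle(meter_reports):
    """Build one period's settlement statement from meter reports.

    `meter_reports` maps a stream ID to True when the meters recorded a
    successful delivery. Hop charges accrue only for successful deliveries.
    Returns {(payer, payee): amount owed}.
    """
    delivered = sum(1 for ok in meter_reports.values() if ok)
    statement = defaultdict(Decimal)
    for payer, payee, amount in HOP_CHARGES:
        statement[(payer, payee)] += amount * delivered
    statement[("Tata", "nuMetra")] += SETTLEMENT_FEE * len(meter_reports)
    return dict(statement)


# Hypothetical period: stream s3 failed somewhere along the path.
reports = {"s1": True, "s2": True, "s3": False, "s4": True}
for (payer, payee), amount in settle(reports).items():
    print(f"{payer} owes {payee}: ${amount}")
```

With three of four streams delivered, the statement shows Paramount owing Tata $9.00, Tata owing AT&T $6.00, AT&T owing Cablevision $3.00, and Tata owing nuMetra $0.24.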

The InterStream Association is the participant body that directs the Media Grid ecosystem, establishing and evolving settlement rules, inter-provider performance standards, equipment certification standards, archival system rules, equipment upgrade and maintenance schedules, etc. for the operation of the ecosystem. It is within this open industry forum that the Media Grid ecosystem will evolve.

The Media Grid Ecosystem as described provides content providers with "diamond lane access" for their high-quality streams. Mechanisms intrinsic to the system incent all players in the streaming path to upgrade their infrastructure to gain incremental revenue.

The big winners in this effort are the last mile providers, who, as a result of caching, play the strongest role in the collective ecosystem, and will realize the most incremental revenue as a side effect.

But really, the entire ecosystem wins: at last there is a way for those willing to pay for high-quality access to the eyeballs to get the access their applications require, and for those who provide the bandwidth to participate in the revenue flow.

This discussion paper describes the ecosystem in general terms, using numbers chosen purely for illustration.


This paper is the result of a lot of thinking by Jeff Turner, Scott Landman, and the team at nuMetra. Great feedback and suggestions came from countless anonymous contributors and many folks in the peering community, including Keoki Andrus, Ken Florence (Netflix), Peter Harrison, Ren Provo, and Richard Steenbergen (nLayer).


William B. Norton is the author of The Internet Peering Playbook: Connecting to the Core of the Internet, a highly sought-after public speaker, and an internationally recognized expert on Internet Peering. He is currently employed as the Chief Strategy Officer and VP of Business Development for IIX, a peering solutions provider. He also maintains his position as Executive Director for DrPeering.net, a leading Internet Peering portal. With over twenty years of experience in the Internet operations arena, Mr. Norton focuses his attention on sharing his knowledge with the broader community in the form of presentations, Internet white papers, and most recently, in book form.

From 1998 to 2008, Mr. Norton’s title was Co-Founder and Chief Technical Liaison for Equinix, a global Internet data center and colocation provider. From startup to IPO and until 2008, when the market cap for Equinix was $3.6B, Mr. Norton spent 90% of his time working closely with the peering coordinator community. As an established thought leader, his focus was on building a critical mass of carriers, ISPs and content providers. At the same time, he documented the core values that Internet Peering provides, specifically, the Peering Break-Even Point and Estimating the Value of an Internet Exchange.

To this end, he created the white paper process, identifying interesting and important Internet Peering operations topics, and documenting what he learned from the peering community. He published and presented his research white papers in over 100 international operations and research forums. These activities helped establish the relationships necessary to attract the set of Tier 1 ISPs, Tier 2 ISPs, Cable Companies, and Content Providers necessary for a healthy Internet Exchange Point ecosystem.

Mr. Norton developed the first business plan for the North American Network Operator's Group (NANOG), the Operations forum for the North American Internet. He was chair of NANOG from May 1995 to June 1998 and was elected to the first NANOG Steering Committee post-NANOG revolution.

William B. Norton received his Computer Science degree from State University of New York Potsdam in 1986 and his MBA from the Michigan Business School in 1998.

Read his monthly newsletter: http://Ask.DrPeering.net or e-mail: wbn (at) TheCoreOfTheInter (dot) net


The Peering White Papers are based on conversations with hundreds of Peering Coordinators and have gone through a validation process involving walking through the papers with hundreds of Peering Coordinators in public and private sessions.

While the price points cited in these papers are volatile and therefore out-of-date almost immediately, the definitions, the concepts and the logic remain valid.

If you have questions or comments, or would like a walk-through of any part of the paper, please feel free to send email to consultants at DrPeering dot net.

Please provide us with feedback on this white paper. Did you find it helpful? Were there errors or suggestions?

Contact us by calling +1.650-614-5135 or sending e-mail to info (at) DrPeering.net